SignalR on Kubernetes


Written by Samuel Fisher, a Developer at G-Research

SignalR is an extension to the ASP.NET Core framework that makes it easy to write real-time applications where the server can push messages out to clients. Examples of where this might be used are in real-time dashboards or chat apps.

Kubernetes makes it easy to deploy apps and scale them to support more users than a single server could handle. SignalR and Kubernetes are therefore a great combination when creating real-time applications supporting many users.

At G-Research I have been working on apps that do exactly this. For high availability, we run multiple copies of each service (in Kubernetes terminology, each copy is called a pod), and I found that some extra steps are required to get everything working smoothly.

Negotiation

SignalR supports multiple transports such as WebSockets, Server-Sent Events and Long Polling. Some of these transports are short-lived and require the client to make a new request to the server periodically. When a client does this, the server needs to know if it’s a new client, or if it’s an existing client connecting again.

To help identify client requests, SignalR uses a Connection ID (or Connection Token, depending on which version of SignalR is being used) which is assigned in a negotiation step that takes place before the actual SignalR connection is established.

Using a negotiation step results in two calls to the service:

- Negotiate

- Connect

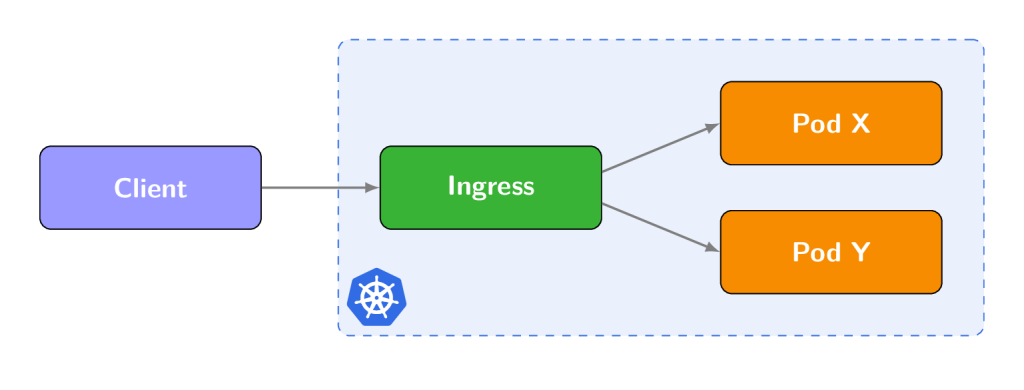

When running services on Kubernetes behind an Ingress, the ingress controller often balances requests across the pods to maintain an even traffic load. This means that consecutive requests from the same client are likely to be sent to different pods.

In the second request (connect), the client presents the Connection ID it was given by the server in the response to the first request. A problem arises here if the connect request is sent to a different pod than the negotiate request, because that pod will not recognise the Connection ID. If this happens, the server returns 404 Not Found and the SignalR connection fails.
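The failure can be reproduced with a small in-memory model. This is only a sketch under simplifying assumptions: `Pod`, `RoundRobinBalancer` and `negotiateThenConnect` are hypothetical names invented for illustration, not part of SignalR or Kubernetes, and the balancer is assumed to use strict round-robin.

```javascript
// Toy model: each pod keeps its own in-memory set of connection IDs.
class Pod {
  constructor(name) {
    this.name = name;
    this.connectionIds = new Set();
    this.nextId = 0;
  }

  // Negotiate: issue a new connection ID and remember it on this pod only.
  negotiate() {
    const connectionId = `${this.name}-${this.nextId++}`;
    this.connectionIds.add(connectionId);
    return { status: 200, connectionId };
  }

  // Connect: succeeds only if this pod issued the connection ID.
  connect(connectionId) {
    return { status: this.connectionIds.has(connectionId) ? 200 : 404 };
  }
}

// Assumed balancing policy: each request goes to the next pod in turn.
class RoundRobinBalancer {
  constructor(pods) {
    this.pods = pods;
    this.index = 0;
  }

  route() {
    const pod = this.pods[this.index];
    this.index = (this.index + 1) % this.pods.length;
    return pod;
  }
}

// The two calls a client makes: negotiate first, then connect.
function negotiateThenConnect(balancer) {
  const { connectionId } = balancer.route().negotiate();
  return balancer.route().connect(connectionId).status;
}

const balancer = new RoundRobinBalancer([new Pod("podX"), new Pod("podY")]);
const roundRobinStatus = negotiateThenConnect(balancer);
console.log(roundRobinStatus); // 404: podY never issued the ID that podX handed out
```

With a single pod in the list, the same two calls return 200, which is why the problem only shows up once the service is scaled out.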

Skip Negotiation

We can overcome this issue by forcing the client to use the WebSockets transport and asking it to skip the negotiation step. WebSockets is the only transport that can skip negotiation because it is long-lived and does not need a Connection ID.

This means that once the connection is established, the client will stay connected and communicate with the same pod throughout the lifetime of the SignalR connection. Since no further HTTP requests are made, there is no risk of subsequent requests being routed to a different pod.

This is a client-side setting and can be enabled with the following options when creating the hub connection.

In C#:

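    using Microsoft.AspNetCore.Http.Connections; // HttpTransportType
    using Microsoft.AspNetCore.SignalR.Client;   // HubConnectionBuilder

    var connection = new HubConnectionBuilder()
        .WithUrl("https://example.com/myHub", options => {
            // Go straight to WebSockets without a negotiate request.
            options.SkipNegotiation = true;
            options.Transport = HttpTransportType.WebSockets;
        })
        .Build();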

In JavaScript:

    let connection = new signalR.HubConnectionBuilder()
        .withUrl("https://example.com/myHub", {
            skipNegotiation: true,
            transport: signalR.HttpTransportType.WebSockets
        })
        .build();

There are other ways in which this problem can be solved, such as configuring sticky sessions on the ingress controller itself.
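To illustrate the idea behind sticky sessions, here is a toy model in which the balancer remembers which pod each client was first sent to. It is a sketch only: `createPod`, `StickyBalancer` and the affinity keys are hypothetical stand-ins (a real ingress controller typically uses something like a cookie), and it does not reflect how any particular ingress controller implements affinity.

```javascript
// Toy pod: remembers only the connection IDs it issued itself.
function createPod(name) {
  const issued = new Set();
  return {
    name,
    negotiate() {
      const connectionId = `${name}-${issued.size}`;
      issued.add(connectionId);
      return { status: 200, connectionId };
    },
    connect(connectionId) {
      return { status: issued.has(connectionId) ? 200 : 404 };
    },
  };
}

// Sticky balancer: the first request for an affinity key picks a pod in
// round-robin order; every later request with that key reuses the same pod.
class StickyBalancer {
  constructor(pods) {
    this.pods = pods;
    this.next = 0;
    this.affinity = new Map();
  }

  route(affinityKey) {
    if (!this.affinity.has(affinityKey)) {
      this.affinity.set(affinityKey, this.pods[this.next]);
      this.next = (this.next + 1) % this.pods.length;
    }
    return this.affinity.get(affinityKey);
  }
}

const sticky = new StickyBalancer([createPod("podX"), createPod("podY")]);

// Two clients negotiate and then connect, with their requests interleaved.
const idA = sticky.route("client-a").negotiate().connectionId;
const idB = sticky.route("client-b").negotiate().connectionId;
const stickyStatuses = [
  sticky.route("client-a").connect(idA).status,
  sticky.route("client-b").connect(idB).status,
];
console.log(stickyStatuses); // [ 200, 200 ]
```

Because each client's connect request reaches the pod that issued its Connection ID, both connections succeed even though the requests are interleaved.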

Scaling Out

The second issue is that each copy of the service running in a separate pod will only be aware of SignalR clients connected directly to that pod, and will not be able to send messages to clients connected to other pods.

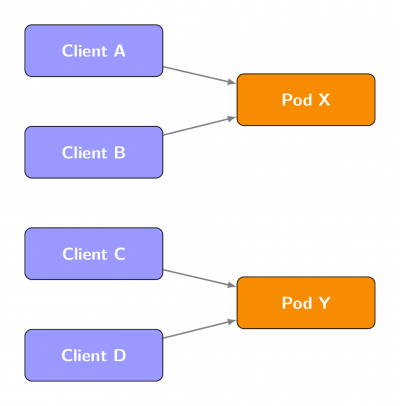

To demonstrate the problem, suppose there are four clients and two pods connected as follows:

When running the following code we would expect a message to be sent to all connected clients:

    await Clients.All.SendAsync("SomeMessage", "SomeValue");

However, if we run this on Pod X, only Client A and Client B will receive the message. This is because Pod X doesn’t know about Clients C and D since they are connected to a different pod.

Backplane

To solve this issue, we need to use a backplane. This allows SignalR to communicate between different instances of the service to ensure that each is able to send messages to all clients, not just those connected directly to it.

In the above example, if Pod X and Pod Y were configured to use a shared backplane, the message would be received by all four clients, not just Clients A and B.
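The difference can be sketched with an in-memory model of the four clients and two pods. Everything here is a simplifying assumption: `Backplane` is a minimal publish/subscribe stand-in rather than Redis, and `HubPod`, `sendToAll`, `buildCluster` and `recipients` are hypothetical names, with `sendToAll` playing the role of `Clients.All.SendAsync`.

```javascript
// Minimal in-memory publish/subscribe, standing in for a shared backplane.
class Backplane {
  constructor() {
    this.subscribers = [];
  }
  subscribe(handler) {
    this.subscribers.push(handler);
  }
  publish(method, value) {
    for (const handler of this.subscribers) handler(method, value);
  }
}

// A pod knows only about the clients connected directly to it.
class HubPod {
  constructor(name, backplane = null) {
    this.name = name;
    this.clients = [];
    this.backplane = backplane;
    // With a backplane, deliver every published message to local clients.
    if (backplane) backplane.subscribe((m, v) => this.deliverLocally(m, v));
  }
  addClient(client) {
    this.clients.push(client);
  }
  deliverLocally(method, value) {
    for (const client of this.clients) client.received.push([method, value]);
  }
  // Without a backplane only local clients are reached; with one, the
  // message is published so that every pod delivers it to its own clients.
  sendToAll(method, value) {
    if (this.backplane) this.backplane.publish(method, value);
    else this.deliverLocally(method, value);
  }
}

// Clients A and B connect to Pod X; Clients C and D connect to Pod Y.
function buildCluster(backplane) {
  const podX = new HubPod("Pod X", backplane);
  const podY = new HubPod("Pod Y", backplane);
  const clients = ["A", "B", "C", "D"].map((name) => ({ name, received: [] }));
  podX.addClient(clients[0]);
  podX.addClient(clients[1]);
  podY.addClient(clients[2]);
  podY.addClient(clients[3]);
  return { podX, podY, clients };
}

// Names of the clients that received at least one message.
function recipients(cluster) {
  return cluster.clients.filter((c) => c.received.length > 0).map((c) => c.name);
}

const isolated = buildCluster(null);
isolated.podX.sendToAll("SomeMessage", "SomeValue");
const withoutBackplane = recipients(isolated);

const shared = buildCluster(new Backplane());
shared.podX.sendToAll("SomeMessage", "SomeValue");
const withBackplane = recipients(shared);

console.log(withoutBackplane); // [ 'A', 'B' ]
console.log(withBackplane); // [ 'A', 'B', 'C', 'D' ]
```

In this model a pod with a backplane never delivers directly; it publishes and then receives its own message back like every other pod, so each client gets the message exactly once.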

The Redis backplane is one option for doing this. When it is enabled, the SignalR instances in each pod communicate with one another via a common Redis instance. This requires a Redis instance that every pod can reach, for example one running on your Kubernetes cluster. For information on how to do this, see deploying an application with Redis.

To enable the Redis backplane, reference the Microsoft.AspNetCore.SignalR.StackExchangeRedis package and call AddStackExchangeRedis in Startup.ConfigureServices:

    services.AddSignalR().AddStackExchangeRedis("<connection_string>");

Conclusion

By making a few configuration changes to how SignalR is set up, we can ensure that SignalR services running on Kubernetes behave correctly and can communicate with all clients.

For more information on this topic, see Host and Scale on the ASP.NET Core project site.